With the very real possibility of oil/gas prices climbing to catastrophic levels due to absolutely stupid military activity by the U.S. against Iran, I decided to snap up an old Nissan Leaf while the getting was good.

There were quite a few options available when I started my search on the weekend of March 7/8. I made a short list, but didn’t see a way to fit anything into our schedule until the following weekend. On the morning of the 14th I called the broker in Walnut Creek with our top pick, but they said they already had someone scheduled to look at it and were going to hold it for them for a few hours. A couple hours later they called back to say the first person was unable to get the financing they needed so it was available to us.

We went up and drove it around for a little bit and dropped it off at a shop for an inspection. While we waited we walked down to a 7-Eleven to buy some snacks, and shortly after, it was ready for pickup. No major issues. Some age-appropriate wear and tear. So we bought it. $4500. Still has about 65 miles of range on a full charge–which is plenty for our around-town driving.

After we had already said we would buy it, the employee told us that they had gotten 4 calls about it just that morning. It had been on their lot for 3 weeks and they had even dropped the price once. I guess we weren’t the only ones thinking an electric vehicle sounded increasingly like a good idea.

Remediations

The car didn’t need anything to be drivable, but there were some easily remedied issues I took care of. Wiper blades and cabin air filter needed to be replaced. The location of the cabin air filter is super obnoxious. Of all the work I did it was easily the most annoying.

A strut on the hatchback was leaking. A pair of new struts cost $20, and replacing them took less than a minute.

The driver’s door must have had some work done on it at some point, though no accident appears on the vehicle history. One of the brackets that holds the door on wasn’t paint-matched and the door didn’t hit the strike plate quite perfectly. It was only barely offset and my regular shop adjusted the strike plate so that it matches up just right.

The cargo area has a cover which hooks onto the hatchback to lift it up when the hatch opens. One of the nubs which it connects to on the hatch trim had snapped off. You can’t buy the part anymore and rather than try to find one from a junkyard I made my own solution. I found something on Amazon described as a 10mm ball-head stud rivet with screw back. I pulled the trim off (very carefully) and filed down the broken nub. I drilled a hole just big enough for the screw on the back piece of the rivet. To fit a washer, in order to distribute the load across more of the plastic, I had to cut out a little rib of plastic, but it all went together beautifully.

The 2015 Leaf allows you to schedule when charging can happen, which matters for our electric metering with its peak and off-peak rates. When I went to set it up, the computer complained about the clocks being out of sync: “The clock used for the timers is different from the navigation system’s GPS clock. Please synchronize the timer’s clock with the GPS clock to use the timers.” Apparently, the car and the navigation system each have their own clock, and if they’re out of sync it won’t allow you to set up schedules. Anyway, it then gives you a button to press to “resync the timers,” and when I pressed it, it threw an error and told me to try again or visit a service center.

After a few tries with the same result I turned to the internet to begin sleuthing out more information. I found a number of posts about the issue, with some guidance about using the secret, service menu to set the vehicle clock manually. The theory is that if the clocks are too far out of sync it will fail to automatically synchronize them when you push the button. Why that would be the case, who knows. It seems easier to say, “I got a reliable GPS signal, use that info to set all of the clocks!” but that’s not what it does.

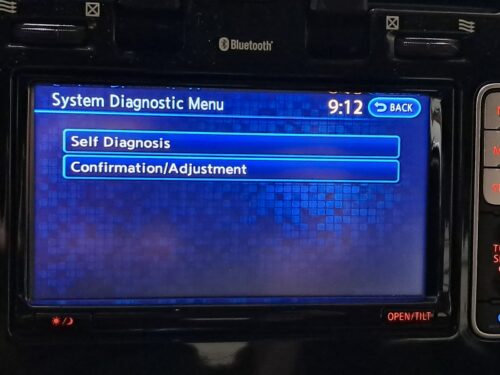

The big question is, “How close do they have to be for the sync to work?” But first, how to access the secret, service menu: With the Audio Power off, press “Map” 3 times (waiting for the double beep each time), press “Audio Power” 2 times, press “Map” once. That should take you to this menu:

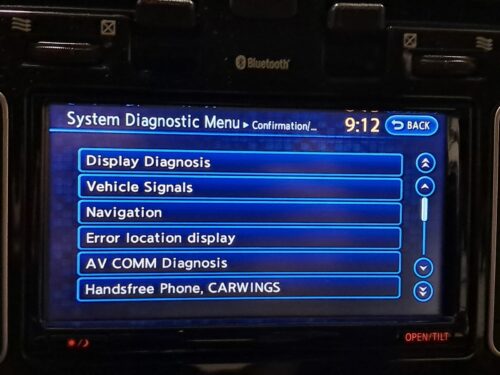

Go to “Confirmation/Adjustment”:

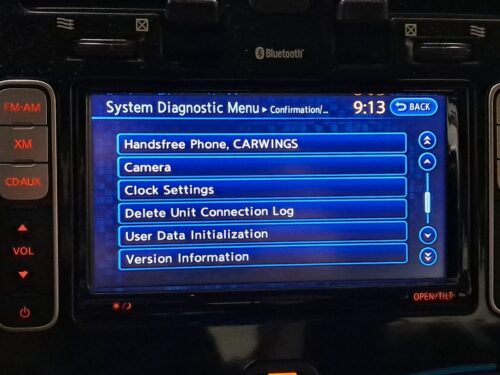

Scroll down to “Clock Settings”:

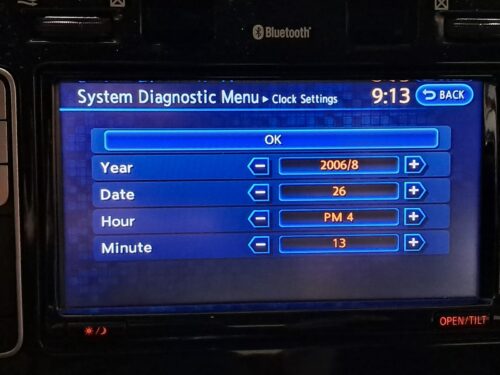

The “Clock Settings” page looks like this:

Based on my experience, this menu always shows Year 2006/8 and Date 26 when opened–regardless of what it’s actually set to. “Year” is “Year/Month” and “Date” is “Day of Month.”

So, we can use this menu to set the vehicle clock. But set it to what? When I set this information to the current date/time I saw the clock on the dashboard change…to something other than what I set. There was something suspicious about the time it was showing, but after a couple of minutes it would update to the correct time (presumably after the GPS signal was processed). Regardless, I still couldn’t get it to “resync the timers.”

I then realized the key. One of the main forums on which I was reading about the issue is based in the UK, and the time the dashboard was temporarily showing me was off by the correct UTC offset (though in the wrong direction). The secret, service “Clock Settings” page must be set to the correct UTC time. Setting their regular local time worked for the UK users because it just so happened to also be UTC. Once I set it to UTC, “resync the timers” worked and the charging scheduling is working beautifully.
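For anyone who would rather not do the time zone math by hand, here is a minimal Python sketch of the conversion. It is only an illustration under a few assumptions: the function name `service_menu_clock_values` is made up, the hour and minute fields are assumed to exist on the “Clock Settings” page alongside “Year” and “Date,” and the example uses a fixed UTC-7 offset (Pacific Daylight Time) with a made-up date and time.

```python
from datetime import datetime, timedelta, timezone


def service_menu_clock_values(local_time: datetime) -> dict:
    """Convert a timezone-aware local time into the UTC values to enter.

    The keys mirror the menu labels described above: "Year" is really
    year/month and "Date" is the day of the month. The hour and minute
    keys are an assumption about the rest of the page.
    """
    if local_time.tzinfo is None:
        raise ValueError("local_time must be timezone-aware")

    utc = local_time.astimezone(timezone.utc)
    return {
        "year_month": f"{utc.year}/{utc.month}",
        "date": utc.day,
        "hour": utc.hour,
        "minute": utc.minute,
    }


if __name__ == "__main__":
    # Hypothetical example: 6:30 PM in a UTC-7 zone (assumed offset).
    pdt = timezone(timedelta(hours=-7))
    example = datetime(2026, 3, 21, 18, 30, tzinfo=pdt)
    print(service_menu_clock_values(example))
```

Note that an evening time west of UTC rolls over to the next calendar day in UTC, so the “Date” field can differ from the local date as well as the hour.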

I couldn’t find any documentation stating this anywhere on the Internet. So here’s my incremental contribution to the world’s knowledge.

Charging Stand

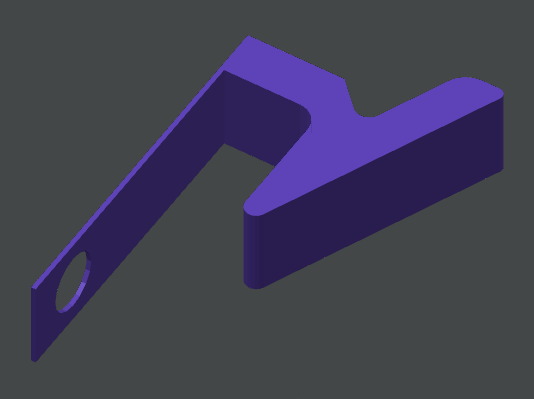

Finally, to make life a little more convenient, I built a little stand for the charger. I was going to use pressure-treated wood and paint it, but it was all in terrible condition. Corinne convinced me to buy the redwood sitting next to it instead. I like the color, so I’ll keep it.

I’m very pleased with the purchase. I like how it drives. I like how quiet it is. I like that I have a small sliver of the “future we were promised” of electric vehicles powered by sunshine–directly from the solar panels on our house!